Roblox, Nevada settle over child-safety standards

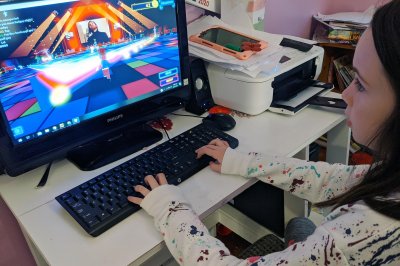

Sophia D’Eramo plays on the online game platform Roblox in 2020 in Franklin, Mass. The state of Nevada and Roblox reached a settlement to better protect young gamers, the Nevada attorney general said Wednesday. File Photo by Emily Flynn/EPA

April 15 (UPI) — Nevada and the online gaming platform Roblox have reached a unique settlement that will help protect young online gamers and pour money into the state’s youth programs, the state’s attorney general said Wednesday.

“This settlement will create a safer environment for our children online,” Attorney General Aaron Ford told reporters during a press conference. “I hope that it will serve as a bellwether for how online interactive platforms allow our state’s youth to use the products.”

Nevada opened an investigation into children's safety on the popular online game creation platform in 2024. Lawsuits in Nevada and other states have alleged that Roblox failed to protect young gamers from online predators and other harms.

As part of the settlement, Roblox will spend about $10 million on non-digital youth programs in the state, plus contribute toward an online safety awareness program.

In addition, the company will adopt stricter age-verification measures that restrict what children below certain ages can see and whom they can communicate with. The safety measures will include facial age-estimation technology, robust parental controls, expanded parental oversight and dedicated law enforcement support.

Roblox has also committed to using government-issued ID for age assurance as well as behavioral monitoring to identify users who may have been assigned the wrong age, Ford said during the press conference.

Roblox will also introduce tighter parental controls and ban encrypted messaging involving minors. If a parent account isn't linked to a child account, the child account will be limited to a restricted child mode. Adults must have a "trusted friend" label, which requires parental consent, before they can chat with users under 13. The changes will also limit notifications during nighttime hours.

Roblox told UPI in a statement that, while it disputes the claims in the complaint, it is "pleased" to have reached a settlement with Ford, saying the agreement reflects the company's "continued commitment to fostering online health and safety for kids."

“Roblox is proud to have worked alongside Attorney General Ford to reach this landmark agreement, which builds on our work to establish a new standard for digital safety,” Roblox Chief Safety Officer Matt Kaufman said.

“This resolution creates a blueprint for how industry and regulators can work together to protect the next generation of digital citizens.”

Roblox told UPI that the agreement helped shape several safety measures, including two new age-based accounts announced Monday: Roblox Kids for users between the ages of 5 and 8 and Roblox Select for users ages 9 to 15.

Beginning in June, the accounts will “more closely align content access, communication settings and parental controls with a user’s age,” Roblox said Monday in a statement.