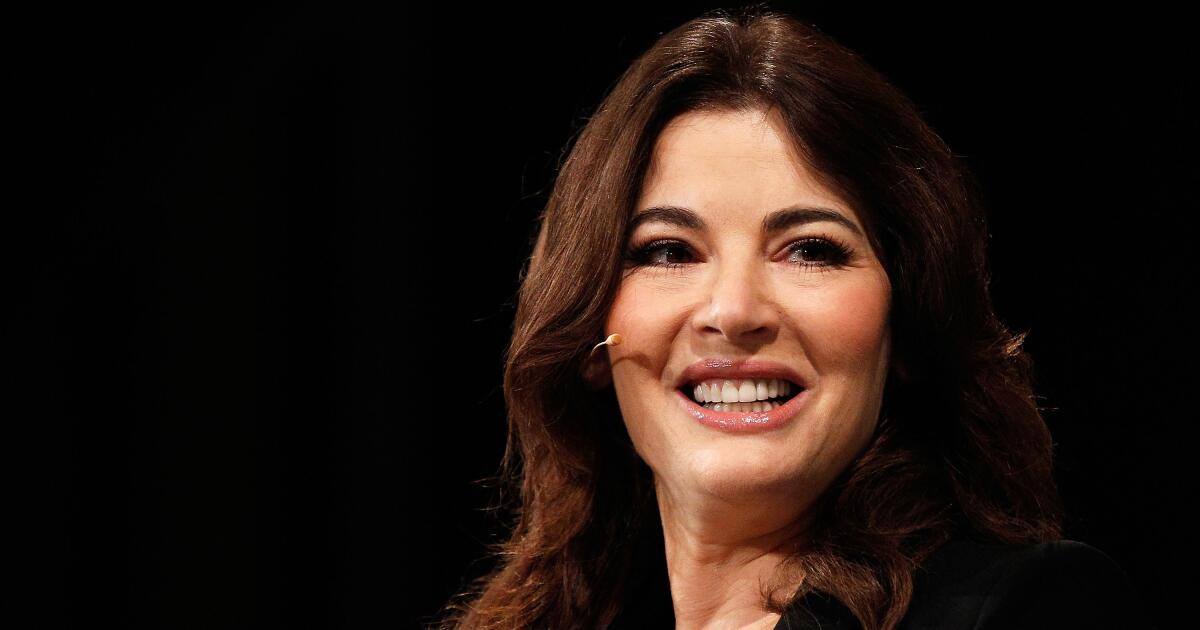

Nigella Lawson is ‘Great British Baking Show’s’ new judge

When “The Great British Baking Show” returns for another season later this year, the tent will welcome a new judge alongside the freshest batch of competitors.

British cookbook author and TV personality Nigella Lawson will join the beloved baking competition as a judge, succeeding Prue Leith, who announced her departure from the series last week. “The Great British Baking Show” (titled “The Great British Bake Off” in the United Kingdom) unveiled Lawson’s appointment Monday on Instagram. She will co-judge alongside longtime “Baking Show” fixture and bread expert Paul Hollywood.

“I’m uncharacteristically rather lost for words right now!” Lawson said in a joint Instagram post. “Of course it’s daunting to be following in the footsteps of Prue Leith and Mary Berry before her, great dames both, but I’m also bubbling with excitement.”

“The Great British Baking Show” first aired on the BBC in 2010, with Hollywood judging competitors’ bakes alongside Mary Berry. Berry departed the series when it moved from the BBC to commercial broadcaster Channel 4, and Leith began her tenure in 2017.

During her “Baking Show” days, Leith became known among fans and competitors for her affinity for boozy bakes and colorful fashion and accessories. Notably, she and Hollywood co-judged the series in its 11th season, which was filmed and aired amid the COVID-19 pandemic.

Leith, announcing her exit, said “Bake Off has been a fabulous part of my life for the last nine years” and looked forward to a new chapter in her life.

“But now feels like the right time to step back (I’m 86 for goodness sake!), there’s so much I’d like to do, not least spend summers enjoying my garden,” she wrote, adding later in her caption that she believes her successor will “love [the show] as much as I have.”

Lawson, a former journalist and the daughter of Nigel Lawson, a member of Margaret Thatcher’s cabinet, comes to “Baking Show” with some history with Channel 4. The broadcaster aired her series “Nigella Bites” in the late 1990s and early aughts in tandem with the release of her book of the same name.

Her television credits also include hosting her series “Nigella Feasts,” “Nigella Express,” “Nigella Kitchen” and “Nigellissima,” and judging on shows including “Iron Chef America,” “MasterChef Australia” and “The Taste,” where she appeared alongside Anthony Bourdain.

She has penned more than a dozen books, most recently 2020’s “Cook, Eat, Repeat.”

“The Great British Bake Off is more than a television programme, it’s a National Treasure – and it’s a huge honour to be entrusted with it,” she said on Monday. “I’m just thrilled to be joining the team and all the new bakers to come. I wish the marvellous Prue all the best, and am giddily grateful for the opportunity!”