CFO Risk Management in a Fractured Global Order

Looking ahead to the second half of the year, corporate finance chiefs are hardwiring contingency into strategy.

Global corporate finance leaders are entering the second half of 2026 facing the most complex operating environment of the post-pandemic era, requiring them to balance cost discipline, technology investment, and capital deployment against a backdrop of geopolitical volatility and renewed energy uncertainty.

At the center of that uncertainty is the Strait of Hormuz. Normally a conduit for around 20% of global oil and liquefied natural gas (LNG) exports, the strait has remained largely blocked since war broke out in the Middle East in late February.

The conflict has added a new shock layer to an environment that was already fragile as a result of tariff turbulence, weakening demand, and declining consumer confidence.

The consequences for corporate finance professionals are direct and serious, forcing teams into defensive mode: conserving cash, deferring capital investment, and stress-testing portfolios against prolonged geopolitical disruption.

Macro Shocks Add Strain

Cost pressures were already elevated before the war, and are continuing their upward trajectory. According to the ACCA and IMA Global Economic Conditions Survey (GECS), the further rise likely reflects some early impacts of the surge in energy and other commodity prices since the outbreak of hostilities in the Persian Gulf. Among the CFOs surveyed, the proportion reporting increased operating costs eased slightly in the first quarter of 2026, but remains high by historical standards.

Confidence across finance teams, meanwhile, fell sharply in the first quarter, taking sentiment to a low point previously seen only at the onset of the Covid-19 pandemic in 2020. Since the GECS survey was conducted in the first half of March, the outbreak of hostilities would have been a major factor weighing on sentiment, owing to the surge in geopolitical uncertainty and the price jump in energy and some other commodities.

Logistics and energy are the most immediate concerns, according to an Allianz Trade survey of 6,000 companies across 13 major economies: 60% said they are worried about supply chain disruption and rising commodity prices, with concern running highest in Vietnam, Poland, the UK, and the U.S.

One consequence of the war-induced shocks is that businesses are holding more inventory, adding to liquidity demand at precisely the moment when rates are falling more slowly than expected, if at all.

Beyond Hedging

When it comes to sustaining readiness in the months ahead, Naresh Aggarwal, associate director, Policy and Technical, at the Association of Corporate Treasurers, says the framework is simple: “plan for the worst, hope for the best.” In practice, this means larger, more committed credit facilities, greater use of derivatives, and hedge duration adjusted to circumstances.

The effects of the war are extending far beyond the energy, shipping, and chemical manufacturing sectors. Alex Ashby, group treasurer at WPP, says the ongoing volatility has driven material change at the global media company.

“Geopolitical volatility has led us to materially step up our focus on foreign exchange risk management,” he notes. “We have invested heavily in training across the organization to raise capability and accountability and introduced new monitoring and reporting so that FX exposures and outcomes are reviewed regularly at executive and board level. Alongside more frequent liquidity stress-testing, this ensures risks are identified earlier, decisions are taken closer to the underlying exposure, and we remain agile as conditions evolve.”

The world remains deeply interconnected, says Raphael Savalle, CFO at Montblanc, and so shocks travel fast and wide. Businesses no longer operate in a world where volatility can be hedged away, but in one where operating models must be built to absorb it.

“This isn’t going away; if anything, it’s increasing,” he says. “It’s the butterfly effect, times 10. The key is to maintain long-term strategic direction while also building agility into how you operate – what I call dynamic P&L management, or dynamic resource allocation – and still be on the lookout every day for risks that may not at first seem relevant but turn out to be, because of the way the world is connected.”

What impact will this level of uncertainty have on the day-to-day in the coming months? Beyond a structured routine of information exchange, it demands the confidence to be candid about these less-obvious risks.

Reassessing the Tech Arsenal

The challenges of the coming months are also prompting some companies to review their technology needs. ERP systems are still the backbone of corporate finance, but their rigidity is fueling demand for smarter, more flexible tools to augment them.

Enterprise Performance Management (EPM) platforms are emerging as a viable contender, broadening their scope beyond finance to cover sales, purchasing, and logistics, says Armand Angeli, AI and automation specialist and vice president of the Digital Transformation and AI Group at DFCG, the French network of CFOs.

Major ERP transformation projects are stalling as companies wrestle with legacy integration, Angeli says; bridging old and new without discarding existing investment remains the central challenge.

“We can’t just abandon ERP,” he says. “We have to create bridges or APIs between AI tools and all the ERPs. So the question becomes, How do you create these bridges? It’s not easy.” While ERPs can be inflexible, they are still valuable tools, “thought through by experts, for CFOs.”

While the major ERP providers are working to embed AI in their offerings, corporate users are taking different routes, depending on individual views and budgets. In practice, then, AI adoption by corporate finance teams is advancing with extreme caution.

“If the pace of change for these tools is 100, the pace of change among individuals is 10, and for companies, it’s 1,” Angeli observes.

Predictive AI, built on auditable algorithms, has earned trust as a tool for reconciliations, fraud detection, and cash posting, while generative AI remains a source of deep skepticism. Hallucinations, compliance failures, and the risk of over-reliance are tangible concerns.

“We now see more and more suspicious posting, more and more duplicate payments,” says Angeli.

Agentic AI is further still from meaningful deployment, he adds: “CFOs don’t trust agentic AI. And given that studies show that hallucinations account for between 30% and 70% of Gen AI output, we don’t trust Gen AI, either. Maybe 1% or 2% of companies can say they have agents working.”

Aggarwal concurs, observing that corporate finance teams remain in the exploratory phase when it comes to AI, but with purpose. Companies are mandating structured upskilling; one treasury team of his acquaintance dedicates half a day every other week to AI-related training or to evaluating AI processes, he says.

Data Integrity

The priority for the second half of this year, however, will be data integrity and learning which insights are genuinely actionable, Aggarwal predicts; truly agentic AI is a story for 2027.

“The word I hear a lot in these circles is trust: trusted data, trusted algorithms, trusted outputs, trusted use of the outputs,” he says. Going forward, the deeper cultural question of whether and when to remove the human from the loop will become harder to avoid as, presumably, AI systems accumulate error-free track records.

Progress may be cautious for now, but Gartner estimates that CFOs who get AI deployment right could unlock 10 additional margin points by 2029. It won’t be isolated pilots that deliver returns, however; the gains will come from managing technology as a portfolio. Three quarters of CFOs are already raising technology budgets for 2026, the research firm finds, with nearly half boosting them by 10% or more.

Quantifying return on investment is difficult for the majority of AI-based projects, however, and will continue to be so through this year, Angeli predicts: “We know that we have to implement AI and hope for financial ROI in the future, but most companies are not seeing it yet.”

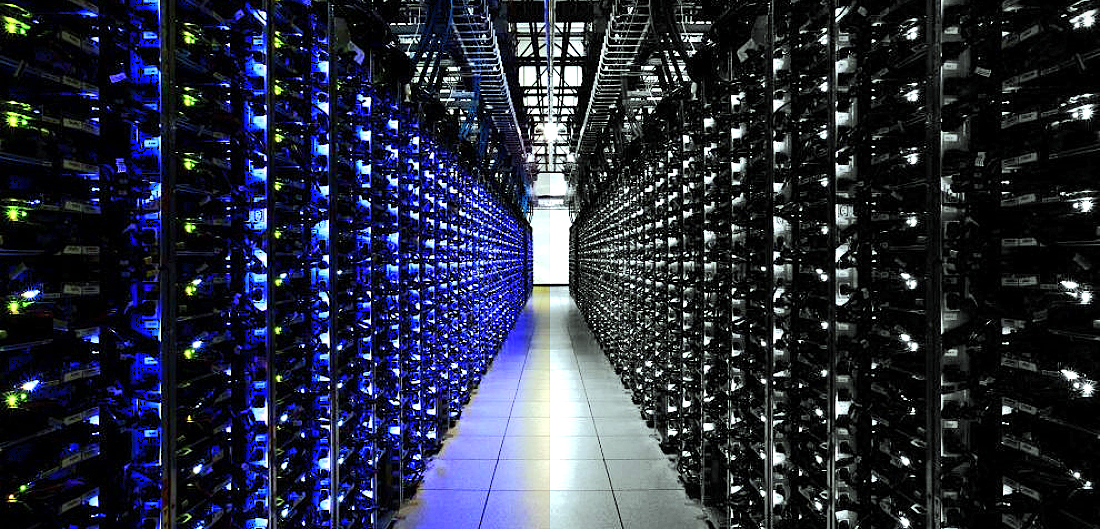

Another aspect of the technology challenge that is intrinsically linked to wider geopolitical developments, says Montblanc’s Savalle, is digital sovereignty, or a nation’s ability to control, secure, and regulate its entire infrastructure in accordance not only with its laws but also with its strategic interests. Different approaches to the governance of these technologies and the accompanying data have deepened geopolitical competition between the U.S., China, and the EU, according to the World Economic Forum.

“Many governments are now insisting that data centers sit within their own borders,” Savalle warns, “and increasingly, they’re looking at software dependency more broadly: not just AI, but email systems, video conferencing tools, the whole stack. As a CFO, you have to consider what that means for your IT architecture.” Under these circumstances, will the old ambition of a single global ERP still be viable in five years’ time? He is not so sure.

Permanent Contingency Thinking

Whether they take the form of physical war or digital friction, geopolitical risks are forcing the finance function into a state of permanent contingency thinking. The closing of the Strait of Hormuz is an extreme case, but it sits within a pattern that was already familiar to CFOs and treasurers. The post-Covid supply chain collapse, the Russia-Ukraine war’s impact on energy and commodities, and the Red Sea disruptions of 2024–25 each forced treasury teams to rethink counterparty risk, liquidity buffers, FX exposure, and supply chain financing.

What’s different this time is that finance leaders are no longer treating the shocks as exceptional.

Aggarwal sees the broader geopolitical realignment as structural rather than cyclical, and doubts even a change in U.S. administration can reverse it: “The genie is out of the bottle around using trade as a way of imposing sovereignty.” Looking ahead, he foresees continued pressure on the finance function to operate against a challenging backdrop.

“What I understand from my CFO network is that there is no going back,” Savalle observes. “This is the new normal, and, if anything, it will continue and expand. So the question is about how you adapt your operating model. Make sure that you get that feedback loop and keep an open mind, because you are going into uncharted territory. Things used to work in a certain world order. This is changing.”

For corporate finance leaders, the priority is no longer waiting for stability to return, but operating effectively in its absence. While keeping to a long-term strategy is vital, so is reconsidering some of the operating model assumptions that a world divided into regional blocs is calling into question. That could include maintaining higher liquidity buffers, diversifying supply chains geographically, stress-testing cash flow forecasts against energy price scenarios, and investing in planning and forecasting tools that allow the organization to model disruption faster.

For the corporate finance function, these are no longer crisis measures, but the baseline.

This article appears in the June 2026 issue of Global Finance Magazine.